Artificial intelligence now sits inside credit approvals, content filters, insurance pipelines, and hiring systems. These tools sort people and actions into risk categories based on data patterns. That speed brings reach, but it also strips away the surrounding story. A missed payment, a job gap, or a sharp spending shift becomes a red flag without any explanation attached. Research from MIT and Stanford shows that statistical scoring treats disruption as a trait rather than a moment. The model does not register why something happened, only that it did.

Credit Scoring Engines Trained On Repayment Data Rather Than Financial Reality

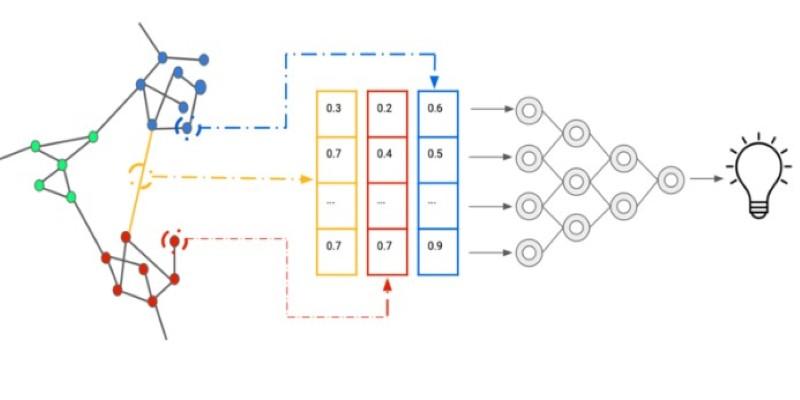

Modern credit risk platforms like FICO Decision Management and Zest AI run on large sets of historical repayment records. Banks use these systems to automate approvals across millions of applications. The operational goal is to lower default exposure while keeping loan decisions fast. A loan officer no longer reviews most cases. The system assigns a probability and pushes the outcome into a core banking workflow.

In practice, the model only knows past behavior. It sees a missed payment, a balance spike, or a short credit file. It does not know about a hospital stay or a delayed insurance payout. Studies from the Federal Reserve have shown that medical debt creates irregular payment patterns that look like financial distress but do not match long-term income stability. Those patterns remain inside the training data, so the model absorbs them and treats them as lasting signals.
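A minimal sketch of that blind spot, assuming a simple logistic scorer. The weights, feature names, and the `note` field are hypothetical and not taken from any real scoring product. Two applicants with identical repayment records but different circumstances get the same probability, because the reason never enters the calculation.

```python
import math

# Hypothetical weights for illustration only; not from any real credit model.
WEIGHTS = {"missed_payments": 0.9, "utilization": 1.5, "file_age_years": -0.2}
BIAS = -2.0


def default_probability(applicant: dict) -> float:
    """Logistic score over repayment features only.

    The function reads the keys listed in WEIGHTS and nothing else,
    so any free-text explanation on the record is ignored.
    """
    z = BIAS + sum(WEIGHTS[name] * applicant[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


# Same repayment record, different stories behind it.
hospital_stay = {
    "missed_payments": 2, "utilization": 0.8, "file_age_years": 6,
    "note": "missed payments during a hospital stay",
}
chronic_overspend = {
    "missed_payments": 2, "utilization": 0.8, "file_age_years": 6,
    "note": "persistent overspending",
}

p_hospital = default_probability(hospital_stay)
p_overspend = default_probability(chronic_overspend)
print(p_hospital == p_overspend)  # the "note" field never reaches the score
```

The design point is the function signature, not the numbers: a scorer can only distinguish cases along the features it is given.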

Banks run into adoption friction here. When regulators ask for denial explanations, the system outputs labels like insufficient payment history or elevated revolving balance. Those labels are technically accurate but empty. They give no room for personal explanation. Teams then build manual override steps, which slow processing and raise compliance costs.
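One way such labels can be produced is by ranking the features that pushed a score up and mapping each to a canned phrase. The sketch below assumes that approach. The feature names, contribution values, and the `top_reasons` helper are hypothetical, and real platforms may derive reason codes differently.

```python
# Hypothetical mapping from model features to canned denial phrases.
REASON_TEXT = {
    "payment_history_months": "insufficient payment history",
    "revolving_utilization": "elevated revolving balance",
    "recent_inquiries": "too many recent credit inquiries",
}


def top_reasons(contributions: dict, k: int = 2) -> list:
    """Return phrases for the k features that raised the risk score most."""
    ranked = sorted(contributions.items(), key=lambda item: item[1], reverse=True)
    return [REASON_TEXT[name] for name, value in ranked[:k] if value > 0]


# Assumed per-feature contributions (in log-odds) for one declined application.
contributions = {
    "payment_history_months": 0.7,
    "revolving_utilization": 1.1,
    "recent_inquiries": -0.1,
}

reasons = top_reasons(contributions)
print(reasons)
```

The output names which inputs mattered. It has no slot for why those inputs looked the way they did, which is the gap manual overrides try to fill.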

This is what automated decision systems look like in their rawest form. These platforms decide outcomes without narrative. They handle volume, not nuance. Scaling them means accepting that edge cases fade out of view.

Fraud Detection Platforms That Flag Anomalies Without Situational Awareness

Payment networks use tools such as Stripe Radar, Feedzai, and Sift to block fraudulent activity in real time. The operational need is obvious. Card networks face chargeback penalties and regulatory pressure. Each transaction gets scored against patterns drawn from billions of prior payments.

A spending spike, a new device, or a location shift raises the risk score. Many times that is right. Other times it is a traveler buying groceries after a long flight or a person replacing a stolen phone. The system blocks the charge. A text message then asks the cardholder to confirm identity.
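A toy version of that scoring logic, assuming three hand-picked rules and made-up weights. Production tools such as the ones named above use learned models over far more signals, so treat the `Transaction` and `Profile` types, the weights, and the threshold as hypothetical. The point is that a traveler with a replacement phone and a thief with a stolen card number can present the same signals.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    amount: float
    device_id: str
    country: str


@dataclass
class Profile:
    typical_amount: float
    known_devices: set
    home_country: str


BLOCK_THRESHOLD = 0.5  # assumed cutoff for illustration


def risk_score(tx: Transaction, profile: Profile) -> float:
    """Add a fixed penalty for each deviation from the cardholder's history."""
    score = 0.0
    if tx.amount > 3 * profile.typical_amount:
        score += 0.4  # spending spike
    if tx.device_id not in profile.known_devices:
        score += 0.3  # new device
    if tx.country != profile.home_country:
        score += 0.3  # location shift
    return score


profile = Profile(typical_amount=40.0, known_devices={"phone-old"}, home_country="US")

# Legitimate: cardholder abroad, buying groceries on a replacement phone.
traveler = Transaction(amount=55.0, device_id="phone-new", country="PT")
# Fraudulent: stolen card details used from an unknown device abroad.
thief = Transaction(amount=55.0, device_id="unknown-device", country="PT")

traveler_score = risk_score(traveler, profile)
thief_score = risk_score(thief, profile)
print(traveler_score >= BLOCK_THRESHOLD, thief_score >= BLOCK_THRESHOLD)
```

Both charges cross the threshold for the same reasons, so the scorer alone cannot separate them. Only an out-of-band step, such as the confirmation text, can.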

Research from the University of Cambridge on financial crime detection found that false positives stay high even in mature systems. The models lack situational memory. They do not track personal life changes. They only see that the pattern moved.

Support teams feel the cost. They field complaints and submit rule change requests. Those changes can weaken fraud capture. Data freshness lags behind real life, and customers pay for that gap.

This shows another limit of AI risk assessment. It treats deviation as danger. It has no sense of ordinary change. Here again, automated decision systems sit between people and money without any grasp of context.

Hiring Algorithms That Rate Instability Instead Of Career Complexity

Applicant tracking systems now integrate screening engines such as HireVue and Pymetrics. Large employers use them to rank thousands of resumes and recorded interviews. The operational aim is to filter quickly and reduce recruiter subjectivity.

These systems score speech patterns, personality signals, and employment history. A long gap or a shift in job type lowers the score. Research from the AI Now Institute found that career breaks correlated with weaker model ratings even when later performance was strong. The system never saw caregiving years or retraining efforts. It only saw irregularity.

Recruiting teams struggle with this. When they override scores, the model retrains. That retraining can tilt results if only certain profiles get rescued. A quiet form of exclusion takes shape inside the data loop.
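The loop can be sketched with a handful of records. This example assumes a deliberately selective override policy in which recruiters rescue only referred candidates. The `referred` field, the policy, and the data are all hypothetical, chosen to show how overrides reshape the labels that the next training run sees.

```python
# Hypothetical screening results: every candidate with a career gap was rejected.
candidates = [
    {"gap": True, "referred": True, "model_pass": False},
    {"gap": True, "referred": True, "model_pass": False},
    {"gap": True, "referred": False, "model_pass": False},
    {"gap": True, "referred": False, "model_pass": False},
    {"gap": False, "referred": False, "model_pass": True},
]


def apply_overrides(rows: list) -> list:
    """Build training labels after recruiters rescue only referred candidates."""
    return [{**row, "label": row["model_pass"] or row["referred"]} for row in rows]


def pass_rate(rows: list, **match) -> float:
    """Share of positive labels among rows matching the given field values."""
    subset = [r for r in rows if all(r[key] == value for key, value in match.items())]
    return sum(r["label"] for r in subset) / len(subset)


training_rows = apply_overrides(candidates)
rescued_rate = pass_rate(training_rows, gap=True, referred=True)
unrescued_rate = pass_rate(training_rows, gap=True, referred=False)
print(rescued_rate, unrescued_rate)
```

A model retrained on these labels would learn that a gap is acceptable only alongside a referral, a rule nobody wrote down.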

This is where algorithmic bias grows. Not through intent, but through missing context. The model reads stability as sameness. Human careers do not behave that way.

Content Moderation Engines That Confuse Harm With Messy Language

Social platforms rely on automated moderation systems to flag risk in posts, comments, and images. Tools built on large language models score content for toxicity, misinformation, or policy violations. The operational demand is scale. Millions of posts per hour require review.

A sarcastic remark or reclaimed slur can trigger a flag. The system has no sense of community norms. Research from the Electronic Frontier Foundation documented how activist groups using coded language were penalized by moderation bots. Their posts matched harmful patterns without harmful intent.
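A keyword matcher is a much cruder stand-in for the model-based tools described here, but it makes the failure mode easy to see. The blocklist, the `is_flagged` helper, and the sample posts below are hypothetical. An attack and a self-deprecating joke trip the same rule because both contain the same surface pattern.

```python
import re

# Hypothetical blocklist; real systems use learned classifiers, not word lists.
FLAGGED_TERMS = {"idiot", "trash"}


def is_flagged(post: str) -> bool:
    """Flag a post if any word in it appears on the blocklist."""
    words = set(re.findall(r"[a-z']+", post.lower()))
    return bool(words & FLAGGED_TERMS)


attack = "You are an idiot and your work is trash."
self_deprecating = "I'm such an idiot, I locked my keys in the car again."
benign = "Thanks for the detailed write-up."

results = [is_flagged(attack), is_flagged(self_deprecating), is_flagged(benign)]
print(results)
```

Who is speaking, to whom, and in what community are not inputs to the check, so they cannot change its result.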

Moderation teams then face queues filled with false positives. They clear them by hand, often after long delays. Users get hidden or removed in the meantime. Context arrives after the damage.

Automated decision systems behave the same way in this setting. The pattern holds. The model detects a signal. It cannot read the scene around it.

Conclusion

Risk scoring without context turns probability into judgment. Across finance, hiring, payments, and online speech, the same structure repeats. A model trained on past data labels deviation as danger. Research keeps pointing to the same blind spot. Statistical patterns cannot carry personal stories. Teams build review layers to patch that gap, yet scale pushes those layers aside. People end up treated not as individuals with shifting lives, but as frozen snapshots of old behavior.