Artificial intelligence now sits inside credit scoring, hiring filters, medical triage, and ad delivery. Those systems make choices that touch real people. AI transparency sounds simple, yet the ground shifts once software leaves the lab. Logs exist. Metrics appear. Some steps stay hidden. Others never existed in the first place. Research from MIT, DeepMind, and the Alan Turing Institute keeps pointing to the same reality. Parts of these systems can be traced. Parts remain opaque, even to the teams building them.

Gradient Based Neural Networks In Medical Risk Scoring Systems

Hospitals rely on deep neural networks to flag sepsis, stroke risk, and patient deterioration. These models use thousands of parameters trained on years of clinical records. Engineers can inspect weights, gradients, and loss curves. That data shows how the system adjusted during training. It does not show why a patient received a high risk score at 2:14 a.m.

Clinicians want reasons. Was it blood pressure, lab results, age, or a rare interaction across many signals. Researchers from Stanford and Oxford have shown that gradient based models compress patterns into high dimensional spaces that do not map cleanly to medical concepts. Techniques tied to model explainability, such as saliency maps and feature attribution, attempt to surface which inputs influenced a prediction. In practice those maps often shift when the same patient record is slightly perturbed. That instability limits clinical trust.

Hospitals running these tools face operational limits. Real time predictions must run under strict latency budgets. Adding heavy interpretability layers slows response time. Clinical data streams arrive with missing fields, stale values, and sensor noise. Even perfect transparency on paper becomes fragile in the ward. AI transparency here means offering partial signals rather than full causal stories.

Large Language Models Used In Legal Document Review Platforms

Law firms now push millions of contracts through language models to surface risk clauses and regulatory gaps. The model reads, scores, and flags text in seconds. Partners ask how it reached a given label. Engineers can show token probabilities, attention maps, and training corpus composition. None of that explains why a paragraph was marked as risky in a way a lawyer can act on.

Research from Anthropic and OpenAI shows that these models form internal representations that do not correspond to legal categories. A clause about indemnity may trigger similar neurons as a clause about jurisdiction.

Tools built around model explainability can highlight which phrases carried weight, though those highlights shift when formatting changes or when the document contains scanned text rather than digital copies.

Reinforcement Learning Engines Controlling Warehouse Robotics

Modern warehouses use reinforcement learning to route robots through aisles, manage inventory, and avoid collisions. The policy network receives sensor feeds and outputs movement commands. Engineers can inspect reward curves and policy updates. Those numbers show training health. They do not show why a robot blocked an aisle for thirty seconds during peak hours.

Studies from Berkeley and DeepMind show that reinforcement learning policies encode behavior in ways that resist human-readable rules. Local explanations can be generated by probing the state space around a single decision. Those probes work in simulation. They struggle on the warehouse floor, where lighting shifts, sensors drift, and physical obstacles appear.

Recommendation Algorithms In Large Scale Content Delivery Networks

Streaming services and news platforms rely on recommendation engines to shape what people see. These systems blend collaborative filtering, embeddings, and real time feedback loops. Engineers track click rates, dwell time, and embedding drift. Those metrics show performance. They do not show why a specific article appeared for a specific viewer at a specific moment.

Academic work from Meta and the University of Amsterdam shows that small changes in user behavior can shift recommendation trajectories across hours. Explanations built on model explainability tools can highlight which past interactions mattered. They cannot account for the cascade of other users interacting with the same content in parallel.

Content teams face editorial handoff delays. When an article is flagged as sensitive, the algorithm may still push it for several minutes while caches update. That lag creates a window where explanations no longer match output. AI transparency here is bounded by distributed systems physics, not just math.

Financial Credit Models Running Under Regulatory Audit

Banks use machine learning to approve loans, set limits, and detect fraud. Regulators demand traceable decisions. Teams deploy gradient boosted trees, neural networks, and hybrid stacks. Some parts remain interpretable. Others do not. Model cards and audit logs document training data, version numbers, and validation scores.

Research from the Federal Reserve and the Bank of England shows that complex models reduce bias in some datasets while creating new blind spots in others. Tools tied to model explainability provide feature attributions for each loan decision. Those attributions can be misleading when features interact. A small income change may flip the model even though income alone appears minor.

What Can Be Explained And What Stays Out Of Reach

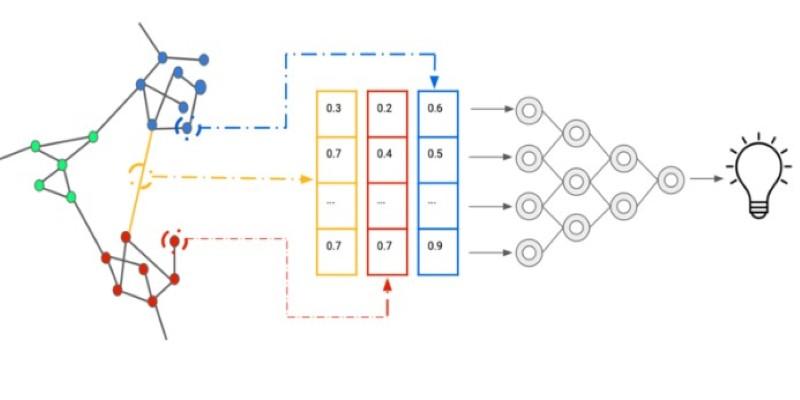

Across these domains a pattern appears. Training processes can be logged. Inputs and outputs can be stored. Local influences can be approximated. Internal reasoning inside deep models remains fragmented. Research from the Alan Turing Institute shows that neurons often represent blended concepts that do not align with human categories.

That gap does not stem from secrecy. It comes from how these systems compress information. When millions of parameters adjust together, causality spreads across the network. AI transparency then covers provenance, data lineage, and decision context. It does not deliver full narratives of internal thought.

Teams still need something they can defend. They rely on audit trails, counterfactual testing, and post hoc analysis. A rejected loan can be rerun with adjusted income to see how outcomes shift. A medical alert can be replayed with altered vitals. These methods give operational confidence even when internal states remain opaque.

Conclusion

AI transparency sits between what software can log and what mathematics hides. Deep learning, reinforcement learning, and large language models all compress patterns beyond direct human reading. Teams build layers of reporting, auditing, and explanation on top of that core. Those layers support trust and compliance. They never reveal everything. Research keeps narrowing the gap, yet some opacity remains baked into the architecture. That reality shapes how these tools are governed, deployed, and questioned in real operations.