AI systems are often treated as if they behave like steady machines. Teams expect similar tasks to produce similar results. That assumption breaks quickly in real use. One chatbot drafts a clean contract clause, then stumbles on a near copy. A vision model tags product photos one day and mislabels the next batch. These gaps rarely come from random failure. They grow out of how data flows, how models were trained, and how the surrounding systems make decisions.

Training Data Boundaries Inside Large Language Models

Large language models operate inside strict data limits, even if the interface feels open. Each system reflects the texts it absorbed during training, filtered through weighting rules and safety layers. When a support team asks the same model to answer billing questions and then legal questions, the surface similarity hides a wide difference in how much high-quality data exists in each area.

Stanford’s HELM benchmark reports results scenario by scenario, and accuracy swings widely across domains even when the prompts look alike. A model can score high on open-domain trivia while failing on contract interpretation or medical phrasing. The training sets behind those areas vary in size, clarity, and structure. Public web text offers millions of examples of casual writing but far fewer clean samples of regulated language.

This becomes visible inside real tools. A content team using an enterprise chatbot to draft marketing copy often sees stable tone and grammar. The same system asked to rewrite product warranties produces odd phrasing or missing clauses. The issue is not reasoning. It is exposure. The model has seen many ads and far fewer legal templates.

These gaps drive AI performance variability even when the task looks identical. Two rewriting jobs may share a format but rely on different parts of the model’s internal map. One sits on dense data. The other rests on thin ice.

Evaluation Layers Used By Support Automation Platforms

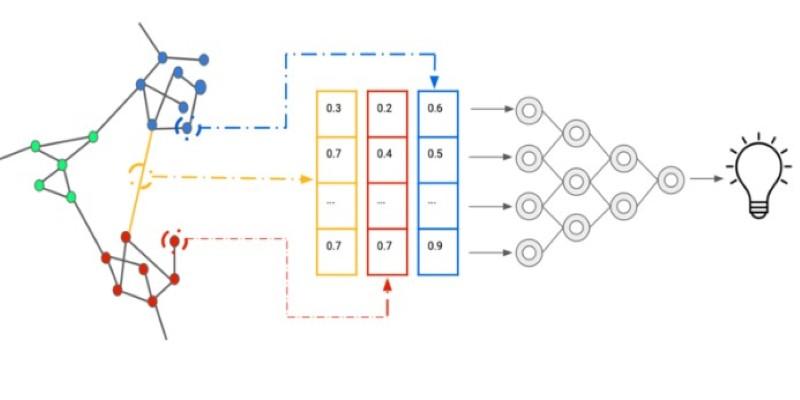

Support automation platforms rely on several AI systems working together. One model interprets the customer message. Another pulls a knowledge base article. A third writes the reply. Each step has its own scoring and routing logic. When any layer misfires, the full output shifts.

Zendesk and Intercom both publish research on intent detection drift. A user might ask, “I was charged twice,” and the model routes it to billing. A similar message, “I see two charges,” may land in general support. The language is close. The labels differ.

This affects how replies look. Billing flows often include templates with refund policies. General support replies sound softer and less specific. Teams reviewing transcripts often assume the writing model failed. The deeper cause sits in the classification layer.
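The routing effect can be shown with a minimal sketch. It assumes a toy keyword classifier and two made-up reply templates. None of the names, keywords, or templates come from a real platform, and a production intent model is far more capable, but the failure has the same shape: the writing stage only ever sees the route it was handed.

```python
# Toy two-stage support pipeline: an intent classifier picks a route,
# and the route decides which reply template the writer stage uses.
# All names, keywords, and templates here are hypothetical.

BILLING_KEYWORDS = {"charged", "refund", "invoice"}

TEMPLATES = {
    "billing": "We found the payment in question. Our refund policy applies here.",
    "general": "Thanks for reaching out! Could you tell us a bit more?",
}


def classify_intent(message: str) -> str:
    """Naive keyword classifier standing in for a real intent model."""
    words = {w.strip(".,!?").lower() for w in message.split()}
    return "billing" if words & BILLING_KEYWORDS else "general"


def draft_reply(message: str) -> str:
    """The writer stage only sees the template chosen by the route."""
    return TEMPLATES[classify_intent(message)]


for msg in ("I was charged twice", "I see two charges"):
    print(f"{msg!r} -> {classify_intent(msg)}")
```

In this sketch the first message routes to billing and the second to general support, because "charges" is not in the keyword set. The two replies differ even though the writer stage did nothing wrong.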

Real usage shows this clearly. A retail brand running automated chat sees consistent responses for shipping delays. Refund requests swing in tone and detail. The knowledge base articles behind refunds update more often, and the intent classifier has a higher error rate there. The writing model receives different inputs, so the final message varies.

Limits add friction. Knowledge bases have stale pages. API calls time out. Escalation rules block certain answers. These constraints shape the output far more than most users realize, and they explain why similar tickets can get uneven replies.

Vision Model Pipelines In Product Tagging Systems

Image recognition tools look steady when tested on sample sets. Live product feeds tell a different story. Retail platforms using Google Vision or AWS Rekognition run photos through resizing, background removal, and compression before analysis. Each step alters what the model sees.

Research from MIT on image preprocessing shows that small changes in contrast or cropping can shift label confidence by large margins. A shoe photo with a white background might get tagged as footwear. The same shoe with shadows or packaging may trigger a sports label or an accessory label.

Teams running catalog automation face this daily. A fashion marketplace uploads vendor photos in mixed formats. Some arrive as studio shots. Others are phone images. The tagging model is the same. The pipeline is not. One batch produces clean attributes. Another floods the system with manual review flags.

Scaling adds more strain. Rate limits force some images to be queued or downsampled. That lowers resolution and removes fine detail. The model may never see exactly the same input twice, even when the product is identical. That leads to AI performance variability that looks mysterious unless the full pipeline is visible.
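The loss of fine detail can be sketched with plain Python and no imaging library. The example assumes a hypothetical 4x4 grayscale grid and simple 2x2 block averaging. Real pipelines use other resampling filters, so treat it as an illustration of the effect, not of any vendor's preprocessing.

```python
# Toy illustration: 2x downsampling by block averaging erases a fine pattern.
# The 4x4 "images" are hypothetical grayscale grids (0 = black, 255 = white).

def downsample_2x(image: list[list[int]]) -> list[list[int]]:
    """Average each non-overlapping 2x2 block into one pixel."""
    out = []
    for r in range(0, len(image) - 1, 2):
        row = []
        for c in range(0, len(image[r]) - 1, 2):
            block_sum = (image[r][c] + image[r][c + 1]
                         + image[r + 1][c] + image[r + 1][c + 1])
            row.append(block_sum // 4)
        out.append(row)
    return out


# One-pixel-wide vertical stripes, standing in for stitching or fine texture.
striped = [[0, 255, 0, 255] for _ in range(4)]
# A flat mid-gray surface with no texture at all.
flat = [[127, 127, 127, 127] for _ in range(4)]

print(downsample_2x(striped))
print(downsample_2x(flat))
```

Both calls produce the same 2x2 grid of mid-gray pixels. After downsampling, the striped product and the flat one are indistinguishable to whatever model runs next.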

Developer Tooling Built On Code Generation Models

Code assistants show a sharp version of this pattern. GitHub Copilot and similar tools can write a working function for one file and then stumble in the next file using the same library. The codebase context changes the model’s behavior.

Research from Microsoft on code completion found that context window limits shape output more than language complexity. When a file exceeds the context window, older functions fall out of view. The model guesses based on partial patterns. A small utility file works fine. A large service file leads to mismatched variables and broken imports.
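A minimal sketch of that truncation follows. It assumes whitespace-separated "tokens" and a policy of keeping only the most recent ones. Real assistants use subword tokenizers and more selective context strategies, and the function name, budget, and file contents here are all hypothetical.

```python
# Toy context-window truncation: keep only the most recent tokens.
# Whitespace "tokens" and the fixed budget are simplifying assumptions.

def visible_context(source: str, max_tokens: int) -> str:
    """Return the tail of `source` that fits in `max_tokens` tokens."""
    if max_tokens <= 0:
        return ""
    tokens = source.split()
    return " ".join(tokens[-max_tokens:])


helper_def = "def get_logger(name): return logging.getLogger(name)\n"
edit_site = "def handle(request):\n    # add logging here"

# A small utility file: the helper sits right above the edit site.
small_file = helper_def + edit_site
# A large service file: 50 unrelated lines separate helper and edit site.
large_file = helper_def + "x = compute()\n" * 50 + edit_site

BUDGET = 20  # hypothetical token budget
print("get_logger" in visible_context(small_file, BUDGET))
print("get_logger" in visible_context(large_file, BUDGET))
```

The first check prints `True` and the second prints `False`. In the large file the existing helper has fallen out of the visible window, so a model working from that window has nothing telling it that `get_logger` exists.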

Teams feel this when working in monorepos. A developer asks the assistant to add a logging hook. In one service, it follows the existing style. In another, it invents a new logger. The prompt is the same. The visible code is not.

Latency and API limits add more noise. Some environments batch requests. Others stream them. When the tool times out, it returns shorter or truncated code. Developers often blame the model. The system around it caused the change.

These factors stack together. Training data, context limits, and infrastructure all press on the output. The result is uneven performance that tracks system design more than raw model quality.

Conclusion

AI systems look consistent on the surface. Underneath, they run on layered decisions, uneven data, and fragile pipelines. Similar tasks trigger different internal paths, and those paths carry their own blind spots. Research across language, vision, and support automation shows that small shifts in input or routing produce large swings in output. Teams that treat these tools as simple engines miss the real cause of variation. The work happens inside the plumbing, not the interface.